Accelerating Discovery — How GraphRAG Drives ROI in R&D and Knowledge-Intensive Workflows

This article explains how GraphRAG, built on Graphwise’s graph-native platform, helps organizations cut search time, speed up discovery, and turn AI pilots into measurable ROI across research and knowledge-intensive workflows.

Companies are competing to innovate faster, but success does not go to the company with the most data. It goes to the team that can turn data into actionable knowledge and find the right evidence, pattern, or prior decision at exactly the moment it is needed.

Yet most researchers face challenges not because they lack information, but because they struggle to keep track of insights from different formats, systems, and disciplines. A report shows that knowledge workers spend 30% of their time searching, filtering, and revalidating information spread across disconnected tools and repositories (silos).

Many companies use retrieval-augmented generation (RAG) to search through documents and systems, but the approach reaches its limits on complex problems.

This is where GraphRAG comes in. It turns scattered documents into connected knowledge and helps organizations cut research time, reduce busywork, and turn AI from a prototyping expense into an ROI driver.

In this article, we will cover the limitations of traditional RAG in complex R&D discovery. We will also discuss how GraphRAG connects entities and evidence to enable traceable reasoning, shorter research cycles, and faster decisions.

Why traditional RAG isn’t enough for complex discovery

Standard RAG uses vector search. It splits documents into small chunks, converts those chunks into numerical vectors (embeddings), and, when a user asks a question, retrieves the chunks whose vectors are nearest to the question’s vector.

While this works for basic Q&A, it falls short in research-intensive workflows for two reasons.
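The pipeline described above can be sketched in a few lines. This is a minimal illustration, not a production retriever: the `embed` function here is a toy bag-of-words counter standing in for a learned embedding model, and the sample documents, function names, and chunk size are all assumptions made for the example.

```python
import math
from collections import Counter

def chunk(text, size=12):
    """Split text into fixed-size word windows (a toy chunker)."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]

def embed(text):
    """Toy embedding: lowercase word counts. Real systems use a learned model."""
    return Counter(w.strip(".,?").lower() for w in text.split())

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, chunks, k=2):
    """Return the k chunks most similar to the query."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]

# Hypothetical documents, invented for this example.
docs = [
    "Compound A inhibits protein P53 in the 2019 trial.",
    "The cafeteria menu changes every Monday.",
    "Protein P53 levels rose after treatment with compound B.",
]
chunks = [c for d in docs for c in chunk(d)]
top = retrieve("Which compounds affect protein P53?", chunks)
print(top)
```

The retriever surfaces the two chunks that share words with the question, but it returns them as isolated snippets. Nothing in the result says that one compound inhibits the protein while the other raises its levels, or how the two trials relate.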

The “chunking” blind spot

Vector search retrieves similar text chunks, but it does not understand entities (molecules, compounds, customers, assets). Nor does it understand the relationships between those entities (causes, inhibits, depends on, has side effect).

Suppose a healthcare researcher asks, “How does this specific protein interact with our current pipeline of inhibitors across all trials since 2018?” Traditional RAG may grab snippets that mention the protein name, but it will not understand the logic of the trials and will not provide a synthesized answer.

Shallow context and hallucination

Traditional RAG often produces snippet collages: answers that look correct because they use the right keywords but miss the edge cases and long-range relationships that are critical for discovery. That is unacceptable in high-stakes R&D, healthcare, or regulated industries.

Without a structured understanding of the data, the LLM is likely to hallucinate plausible-sounding connections or miss weak signals hidden across multiple documents.

What GraphRAG changes: from documents to connected knowledge

GraphRAG reframes retrieval as a graph problem rather than a nearest-neighbor search problem.

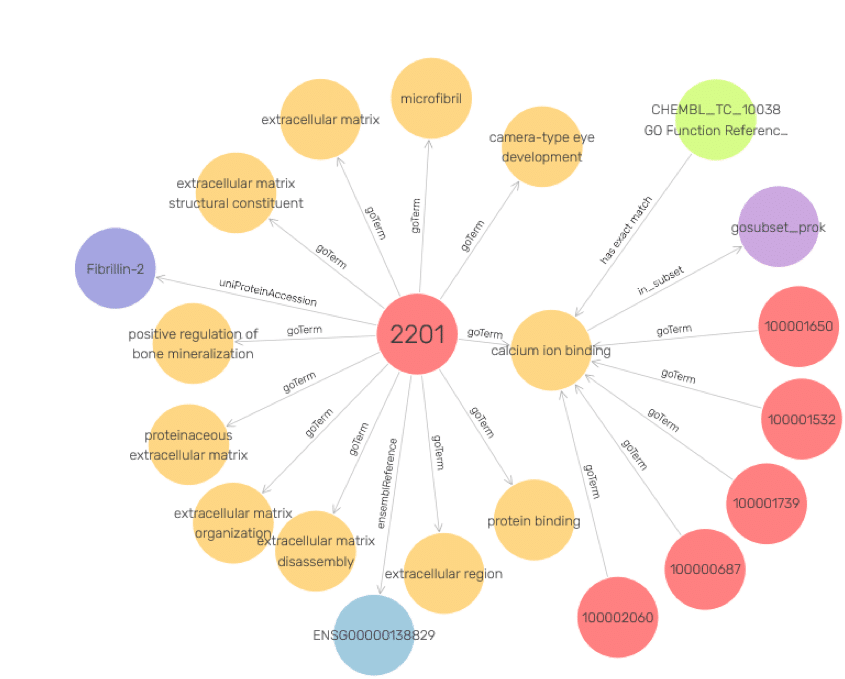

Instead of treating content purely as text, it builds a knowledge graph that encodes entities (such as drugs, materials, customers, assets, or policies), their attributes, and the relationships between them. Language models then query and interpret that graph to retrieve the most relevant subgraphs, including chains of related experiments, supporting evidence, constraints, and precedents.

The graph approach enables entity- and relationship-aware retrieval. For example, a researcher investigating a manufacturing defect can identify similar cases even when they are described in different terminology, or when the same type of failure occurred under similar conditions, across different documents and formats.

Similarly, an R&D leader can ask which projects may be impacted by a new regulatory ruling and receive a graph-grounded answer that connects regulations, products, markets, and historical assessments.

Instead of a collage of snippets, GraphRAG composes answers that preserve context, cite sources, and explain how different pieces of evidence fit together.
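To make the manufacturing-defect example above concrete, here is a minimal sketch of graph-based retrieval in plain Python. It is not Graphwise’s implementation: the triples, entity names, source file names, and the `subgraph` helper are all hypothetical, and a real deployment would query a graph database rather than an in-memory list.

```python
from collections import defaultdict, deque

# (subject, predicate, object, source). All names are hypothetical.
TRIPLES = [
    ("report_17", "describes", "seal_cracking", "report_17.pdf"),
    ("ticket_204", "describes", "gasket_fracture", "ticket_204.txt"),
    ("seal_cracking", "instance_of", "elastomer_failure", "ontology"),
    ("gasket_fracture", "instance_of", "elastomer_failure", "ontology"),
    ("report_17", "occurred_under", "high_humidity", "report_17.pdf"),
    ("ticket_204", "occurred_under", "high_humidity", "ticket_204.txt"),
]

def build_index(triples):
    """Index each triple under both endpoints so traversal can go either way."""
    index = defaultdict(list)
    for triple in triples:
        subject, _, obj, _ = triple
        index[subject].append(triple)
        index[obj].append(triple)
    return index

def subgraph(index, start, max_hops=2):
    """Breadth-first expansion: collect triples reachable within max_hops of start."""
    seen_nodes, found = {start}, []
    queue = deque([(start, 0)])
    while queue:
        node, depth = queue.popleft()
        if depth == max_hops:
            continue  # do not expand beyond the hop limit
        for triple in index[node]:
            if triple not in found:
                found.append(triple)
            subject, _, obj, _ = triple
            for neighbor in (subject, obj):
                if neighbor not in seen_nodes:
                    seen_nodes.add(neighbor)
                    queue.append((neighbor, depth + 1))
    return found

index = build_index(TRIPLES)
related = subgraph(index, "elastomer_failure", max_hops=2)
sources = sorted({src for *_, src in related})
print(sources)
```

Starting from the shared failure-mode entity, the traversal reaches both the report and the ticket even though one says “seal cracking” and the other “gasket fracture”. Because each triple carries its source, the retrieved subgraph can be handed to an LLM together with the citations that support it.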

How GraphRAG drives ROI in R&D and knowledge-intensive work

The case for moving to GraphRAG is also financial. The return on investment comes from the following key pillars:

Operational efficiency gains

GraphRAG delivers 15-20% efficiency gains in everyday knowledge work. By reducing the time spent triaging documents and searching for lost insights, organizations can increase their R&D capacity without hiring a single additional researcher.

Maintenance and implementation savings

A hidden cost of AI is the human-in-the-loop effort required to correct errors and manage data pipelines. Because GraphRAG provides more accurate, fact-based summaries from the start, it can cut LLM implementation and maintenance costs by up to 70%. You spend less time fixing the AI and more time using it.

Shorter research cycles

Checking existing information can take weeks in traditional R&D, but with GraphRAG, it takes hours. Research teams can quickly align on what is already known, which prevents duplicate work and allows for more targeted experimentation. Taken together, faster research cycles, lower maintenance costs, and faster time-to-insight mean that organizations using GraphRAG see up to a 3× return on their AI investment.

Leveraging institutional memory

Large organizations are facing brain drain: the loss of knowledge when a senior researcher leaves or a project is mothballed. GraphRAG ensures that past failures and successes are not hidden in archived folders but remain active parts of the current knowledge network.

Strategic and market intelligence

GraphRAG transforms how strategy and risk teams work by combining external information (news, patent filings, market reports) with internal documents, giving organizations a complete picture of their environment. Teams can detect weak signals that regular searches might miss, such as a competitor making slight adjustments to its patent strategy.

How Graphwise’s GraphRAG platform accelerates discovery

Turning GraphRAG from an idea to production requires a strong knowledge graph, semantic modeling expertise, and a scalable retrieval pipeline capable of handling real-world complexity.

Graphwise addresses this need through enterprise-grade graph infrastructure and semantic modeling that turn fragmented content into AI-ready knowledge, at scale.

Graphwise GraphDB manages complex, interconnected data, and its modeling tooling and services help teams design ontologies for their specific domains. These ontologies capture the concepts and relationships that matter to the business and ensure that AI understands how the organization itself thinks about its knowledge.

Graphwise then connects this semantic backbone to a GraphRAG layer that links LLMs with graph-native retrieval. This lets assistants traverse millions or billions of data points, reason over entity relationships, and return context-aware, explainable answers in natural language.

Graphwise can scale to multimillion-record knowledge graphs and supports multilingual content for global organizations with research sites, customers, and regulators across regions. It reduces integration overhead and accelerates time-to-value by packaging knowledge graph infrastructure, semantic modeling, and GraphRAG pipelines together.

Real-world success story: NuMedii & accelerating drug discovery

NuMedii is a biotech company focused on AI-driven drug discovery for new therapies for complex diseases. They faced a challenge common to many R&D organizations. Critical information existed across dozens of databases, each with different schemas and terminologies. Without a unified structure, finding relevant connections and generating reliable hypotheses required extensive manual integration and expert curation.

NuMedii used Graphwise’s GraphDB to build a knowledge graph comprising 7.98 billion triples (data points). This graph integrated over 20 different public and proprietary databases into a single, semantic network.

Graphwise reduced the months NuMedii spent manually going through each document to days of graph-powered discovery. It helps NuMedii find hidden links between biomedical concepts and test new therapeutic hypotheses faster and with more confidence.
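The general idea of unifying differently structured sources as triples can be illustrated with a small sketch. This is not NuMedii’s pipeline or data: the two source schemas, the field names, and the identifiers (`GENE1`, `drug_x`, `disease_y`) are invented for the example, and a real system would store the triples in a graph database such as GraphDB rather than in a Python set.

```python
# Two hypothetical sources describing the same gene under different schemas.
source_a = [{"gene_symbol": "GENE1", "disease": "disease_y"}]
source_b = [{"gene_id": "GENE1", "drug_name": "drug_x", "effect": "inhibits"}]

def triples_from_a(rows):
    """Map source A rows to (subject, predicate, object) triples."""
    for row in rows:
        yield (f"gene:{row['gene_symbol']}", "associated_with", f"disease:{row['disease']}")

def triples_from_b(rows):
    """Map source B rows to triples, using the same gene identifier scheme as A."""
    for row in rows:
        yield (f"drug:{row['drug_name']}", row["effect"], f"gene:{row['gene_id']}")

# One graph, regardless of which source a fact came from.
graph = set(triples_from_a(source_a)) | set(triples_from_b(source_b))

def drugs_linked_to(disease, graph):
    """Two-hop join across sources: drug -> gene -> disease."""
    genes = {s for s, p, o in graph if p == "associated_with" and o == f"disease:{disease}"}
    return sorted(s for s, p, o in graph if s.startswith("drug:") and o in genes)

candidates = drugs_linked_to("disease_y", graph)
print(candidates)
```

Neither source alone links the drug to the disease. The connection appears only once both are expressed in a shared vocabulary, which is the kind of hidden link a unified knowledge graph is meant to surface.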

Conclusion

Viewed narrowly, GraphRAG may look like a smarter search layer for LLMs. But it is better understood as an innovation flywheel that compounds the value of an organization’s knowledge. By connecting the dots between fragmented data sources, it eliminates the discovery tax (the time lost to searching for and revalidating information) and reduces hallucinations.

Organizations that bridge the gap between “having data” and “having connected, navigable knowledge” will consistently out-innovate their peers. For research teams, that means fewer blind alleys, better reuse of hard-won insights, and faster movement from idea to validated result.

Ready to see how GraphRAG can drive a 3× return on your AI investment?