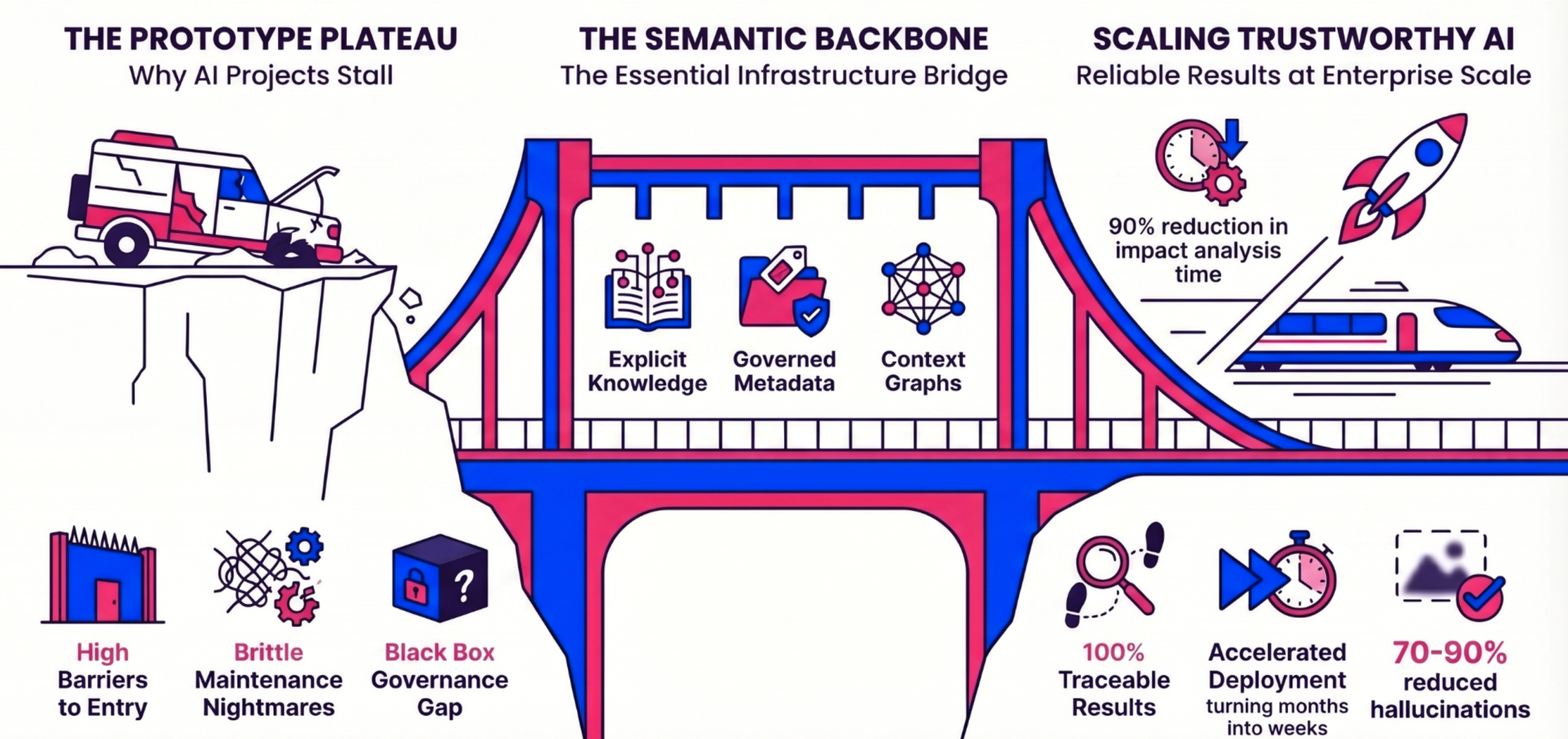

As enterprises rush to deploy GenAI, many hit a frustrating barrier: the “Prototype Plateau”. AI solutions that look magical in controlled demos often fail to scale, producing confident hallucinations because they lack a deep understanding of complex, proprietary enterprise data.

To move from experimentation to scalable, trustworthy AI, organizations require a new foundational infrastructure. This infrastructure is the Semantic Backbone.

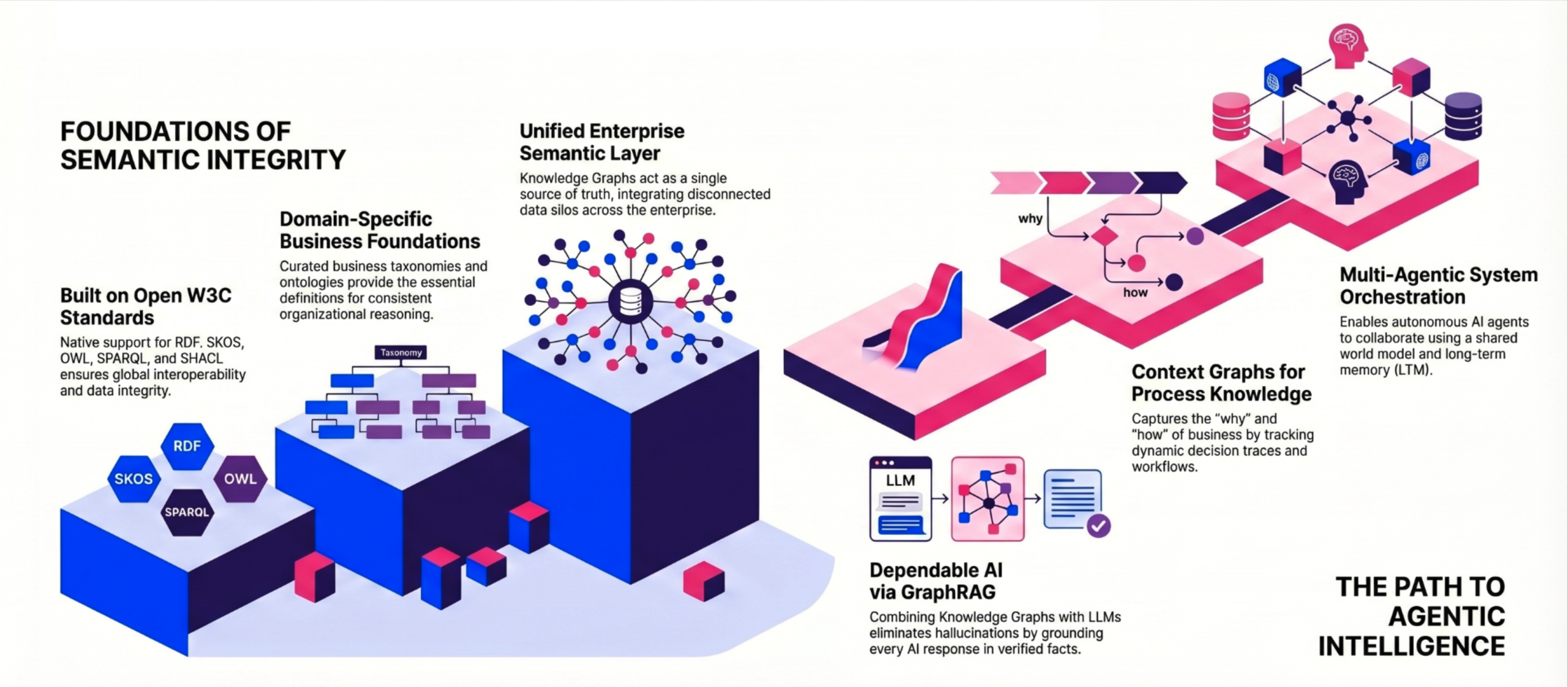

At its core, a Semantic Backbone is an Enterprise Knowledge Graph (EKG) coupled with strict, machine-readable governance models (taxonomies and ontologies). It acts as the “semantic nervous system” of an organization, providing a comprehensive source of truth and a strict “contract on meaning” that unifies fragmented data silos.

The Semantic Backbone vs. a “thin” semantic layer

A semantic backbone serves as the authoritative “nervous system” for data-driven enterprises. It provides a tool-agnostic, machine-readable map of business logic and entity relationships that goes beyond the simple field mapping of “thin” layers, so that multi-agent AI systems can navigate complex data with a shared mental model and high precision. The term “semantic layer”, by comparison, is used frequently in the data and Business Intelligence (BI) communities, but it is often interpreted ambiguously.

A typical “thin” semantic layer acts as a wrapper or an afterthought — a simple “bag of mappings” built for a single purpose, such as ensuring consistent KPIs for a specific BI dashboard.

In contrast, a Semantic Backbone is developed and used as critical infrastructure. It is a tangible, enterprise-wide asset that serves as the comprehensive source of truth for the entire organization. Instead of serving just one downstream tool, the Semantic Backbone simultaneously powers multiple applications, acting as a domain knowledge hub, a metadata hub for data publishing, a digital twin model, and the contextual grounding layer for Agentic AI.

Why a Semantic Backbone is critical for multi-agentic systems

As AI evolves from simple chat interfaces to autonomous, multi-agent systems, the need for a Semantic Backbone becomes non-negotiable. Without it, uncoordinated AI agents operate in silos, leading to operational chaos and “agent sprawl”.

A Semantic Backbone empowers Agentic AI by providing:

- A shared world model: It gives diverse agents a unified understanding of the enterprise domain, allowing a financial agent and a supply chain agent to collaborate using the exact same definitions.

- Long-term memory and context graphs: While standard knowledge graphs act as a static “Map” of facts only, the Semantic Backbone adds a context graph on top. Context graphs act as a “dashcam,” capturing dynamic decision traces, procedural logic, and event traces. This allows autonomous agents to understand not just what happened, but how and why a decision was made, enabling them to simulate and optimize workflows over time.

- Autonomous navigation: Agents need to “crawl” data to find answers. A thin semantic layer is too fragile for this; a semantic backbone provides a machine-readable map (often via a knowledge graph) that allows agents to work out how to join disparate datasets without human intervention.

- Reduced hallucination: Because the backbone provides a strict layer of business logic, agents no longer have to “guess” how to calculate complex metrics. They query the backbone for the definition, ensuring the output is grounded in governed logic rather than probabilistic patterns.

- Actionable context: Multi-agent systems often perform tasks (e.g., “Adjust the credit limit for high-risk users”). The backbone defines the constraints and dependencies of these actions, ensuring agents don’t violate business rules or data integrity during execution.
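The “dashcam” idea from the list above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `DecisionTrace` and `ContextGraph` names, the agent and policy identifiers, and the in-memory list are all hypothetical, and a real context graph would be stored in the knowledge graph itself rather than in a Python object.

```python
from dataclasses import dataclass, field


@dataclass
class DecisionTrace:
    """One recorded decision: what was done, by whom, and on what grounds."""
    agent: str
    action: str
    target: str
    rule: str                                  # governed rule that justified the action
    evidence: list[str] = field(default_factory=list)


class ContextGraph:
    """Append-only log of decisions, layered over the static facts (hypothetical)."""

    def __init__(self) -> None:
        self._traces: list[DecisionTrace] = []

    def record(self, trace: DecisionTrace) -> None:
        self._traces.append(trace)

    def why(self, target: str) -> list[DecisionTrace]:
        """Return every recorded decision that touched `target`, oldest first."""
        return [t for t in self._traces if t.target == target]


ctx = ContextGraph()
ctx.record(DecisionTrace(
    agent="credit-agent",
    action="lower_credit_limit",
    target="customer:42",
    rule="policy:high-risk-limit",
    evidence=["customer:42 riskScore 0.91"],
))

for trace in ctx.why("customer:42"):
    print(f"{trace.agent} did {trace.action} because of {trace.rule}")
```

A second agent can call `why("customer:42")` before acting and see not only the current state but the rule and evidence behind the earlier decision.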

Why RDF outperforms LPG for an enterprise-wide Semantic Backbone

When organizations decide to build an Enterprise Knowledge Graph to serve as their Semantic Backbone, they must choose between two primary graph data models: the Resource Description Framework (RDF) and the Labeled Property Graph (LPG).

While LPG databases are popular for basic graph applications, RDF is fundamentally required to build a true, enterprise-wide Semantic Backbone capable of supporting trustworthy, agentic AI.

The following sections explain why RDF is better suited than LPG to this critical business function, and how LPG’s shortcomings lead to ever-higher costs as use cases become more complex and comprehensive.

Formal semantics and open interoperability

RDF is built entirely upon open W3C Semantic Web standards (such as OWL and SKOS) and utilizes globally unique identifiers. This provides an explicit, unambiguous “contract on meaning” that ensures high cross-platform interoperability and allows enterprises to seamlessly reuse domain knowledge across disparate systems. In contrast, LPGs are designed primarily for graph analytics, not knowledge representation. The LPG world lacks schema standards and shared ontologies, resulting in low semantic interoperability, isolated data silos, and vendor lock-in.
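To illustrate why globally unique identifiers matter for interoperability, here is a minimal Python sketch that models triples as plain tuples. The `example.org` IRIs, the two source systems, and the `describe` helper are made up for illustration; a real deployment would use an RDF store rather than Python sets.

```python
# Triples are (subject, predicate, object). All IRIs below are hypothetical.
EX = "https://example.org/id/"
SCHEMA = "https://example.org/schema/"

crm_triples = {
    (EX + "customer/42", SCHEMA + "name", "Acme GmbH"),
    (EX + "customer/42", SCHEMA + "segment", "Enterprise"),
}
billing_triples = {
    (EX + "customer/42", SCHEMA + "name", "Acme GmbH"),
    (EX + "customer/42", SCHEMA + "openInvoices", 3),
}

# Both systems use the same identifier for the customer, so merging is a
# plain set union; the duplicated name triple collapses into one.
merged = crm_triples | billing_triples


def describe(subject, triples):
    """Collect what is known about one subject (assumes one value per predicate)."""
    return {p.rsplit("/", 1)[-1]: o for s, p, o in triples if s == subject}


print(describe(EX + "customer/42", merged))
```

No mapping table or join key is needed here: the shared identifier is what makes the two datasets line up.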

Native logical reasoning

A true Semantic Backbone does not just store data; it must understand it and deduce new facts. RDF engines feature native logical inference, allowing them to perform 100% correct, multi-hop reasoning across billions of entities. This semantic reasoning is executed much faster and cheaper than asking an LLM to reason probabilistically. LPG engines, on the other hand, lack formal logical reasoning capabilities entirely, relying instead on ad-hoc, procedural queries written by developers.
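As a rough illustration of what rule-based inference means, the sketch below applies two RDFS-style rules (transitivity of `rdfs:subClassOf` and type inheritance) until no new facts appear. It is a toy stand-in written in plain Python, not how an RDF engine is implemented, and the `ex:` terms are invented for the example.

```python
TYPE, SUBCLASS = "rdf:type", "rdfs:subClassOf"

# Hypothetical facts; only the first three triples are asserted.
facts = {
    ("ex:Ibuprofen", TYPE, "ex:NSAID"),
    ("ex:NSAID", SUBCLASS, "ex:AntiInflammatory"),
    ("ex:AntiInflammatory", SUBCLASS, "ex:Drug"),
}


def infer(triples):
    """Apply two RDFS-style rules until no new triple appears (a fixpoint)."""
    closed = set(triples)
    while True:
        new = set()
        for s, p, o in closed:
            for s2, p2, o2 in closed:
                if o != s2 or p2 != SUBCLASS:
                    continue
                if p == SUBCLASS:      # subClassOf is transitive
                    new.add((s, SUBCLASS, o2))
                elif p == TYPE:        # instances inherit superclasses
                    new.add((s, TYPE, o2))
        if new <= closed:
            return closed
        closed |= new


inferred = infer(facts)
print(("ex:Ibuprofen", TYPE, "ex:Drug") in inferred)  # True
```

The fact that Ibuprofen is a Drug was never stated; it follows from the class hierarchy, and the same input always yields the same conclusions.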

Built-In governance and data validation

To deploy AI in production safely, organizations require strict data quality and governance guardrails. RDF explicitly supports automatic semantic validation using standard constraint languages like SHACL, which enforces business rules directly within the graph and guarantees that bad data never enters your AI workflow. LPG architectures lack both native semantic validation mechanisms and the tooling necessary for rigorous quality monitoring.
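The kind of check a constraint language performs can be sketched as follows. This is a hand-rolled analogue in plain Python, not SHACL itself: the `PropertyShape` class, the `validate` function, and the customer shape are hypothetical, and real SHACL shapes are written as RDF and evaluated by a SHACL processor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PropertyShape:
    """A tiny analogue of a SHACL property shape (hypothetical)."""
    path: str
    datatype: type
    min_count: int = 0


# Assumed rule: every customer needs a string name and a float risk score.
CUSTOMER_SHAPE = [
    PropertyShape(path="name", datatype=str, min_count=1),
    PropertyShape(path="riskScore", datatype=float, min_count=1),
]


def validate(node: dict, shapes: list[PropertyShape]) -> list[str]:
    """Return human-readable violations; an empty list means the node conforms."""
    violations = []
    for shape in shapes:
        values = node.get(shape.path, [])
        if len(values) < shape.min_count:
            violations.append(f"{shape.path}: expected at least {shape.min_count} value(s)")
        for value in values:
            if not isinstance(value, shape.datatype):
                violations.append(f"{shape.path}: {value!r} is not {shape.datatype.__name__}")
    return violations


good = {"name": ["Acme GmbH"], "riskScore": [0.91]}
bad = {"name": ["Acme GmbH"], "riskScore": ["high"]}

print(validate(good, CUSTOMER_SHAPE))  # []
print(validate(bad, CUSTOMER_SHAPE))   # ["riskScore: 'high' is not float"]
```

The point of the design is that the rules are data, declared once next to the model, instead of being scattered across ingestion scripts.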

Explainability for high-stakes AI

Because RDF explicitly models domain knowledge, context, and business rules, it provides fully explainable traversals and traceable inference paths. This makes RDF the superior choice for regulated, high-stakes environments — such as Pharmaceutical R&D, compliance intelligence, and financial systems of record — where every AI decision must be transparent and strictly justified.

How to develop and govern a Semantic Backbone

Building a Semantic Backbone is not a rigid, one-size-fits-all academic exercise; it is an evolutionary process that must be tailored to an enterprise’s specific data sources, existing infrastructure, and target use cases. Over the past 20 years, Graphwise has developed robust, manageable, and highly scalable methodologies to guide organizations through this journey.

Depending on your primary business goals and the nature of your data, the development of a Semantic Backbone generally follows one of two distinct options:

Option 1: Focus on Knowledge Management

This option is ideal for document-heavy environments, such as scientific R&D, technical support, or compliance intelligence, where the goal is to extract meaning from vast amounts of unstructured text.

- Phase 1: Establishing the structural foundation

  - Step 1: SKOS Taxonomy: Build a foundation using W3C standards (SKOS) to standardize terminology and resolve semantic ambiguity across the enterprise. Today, Graphwise accelerates this using AI-assisted tools to overcome the “cold start” problem and auto-generate baseline concepts.

  - Step 2: Ontologies: Extend these basic hierarchies with complex, non-hierarchical relationships and formal business rules to enable deep, multi-hop reasoning.

  - Step 3: Linked enterprise data: Unify fragmented data silos into a single source of truth through automated semantic enrichment and data validation.

- Phase 2: Deploying the intelligence layer

  - Step 4: GraphRAG: Fuse the knowledge graph with LLMs to enable traceable, multi-hop reasoning without hallucinations.

  - Step 5: Context graph: Capture the dynamic “dashcam” of decision traces and workflows to record exactly how actions unfold over time.

  - Step 6: Multi-agentic systems: Orchestrate autonomous agents that can collaborate seamlessly using a shared world model and long-term memory.
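Step 1 is the easiest to picture in code. The sketch below shows, under simplifying assumptions, how a SKOS-style taxonomy resolves different spellings and synonyms to one concept. The `Concept` class, the `ex:` URIs, and the `resolve` helper are hypothetical; in SKOS proper these fields correspond to `skos:prefLabel`, `skos:altLabel`, and `skos:broader`.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Concept:
    """A SKOS-style concept: one preferred label, any number of alternatives."""
    uri: str
    pref_label: str
    alt_labels: list[str] = field(default_factory=list)
    broader: Optional[str] = None   # URI of the parent concept, if any


# Hypothetical two-concept taxonomy.
concepts = [
    Concept("ex:nsaid", "Non-steroidal anti-inflammatory drug", ["NSAID", "NSAIDs"]),
    Concept("ex:ibuprofen", "Ibuprofen", ["Advil", "Brufen"], broader="ex:nsaid"),
]

# Build a case-insensitive index from every label to its concept URI.
label_index = {}
for c in concepts:
    for label in [c.pref_label, *c.alt_labels]:
        label_index[label.lower()] = c.uri


def resolve(term):
    """Map a free-text term to a concept URI, or None if it is unknown."""
    return label_index.get(term.strip().lower())


print(resolve("advil"))    # ex:ibuprofen
print(resolve("NSAIDs"))   # ex:nsaid
```

Once every document and record is tagged with concept URIs rather than raw strings, the later steps (ontologies, linking, GraphRAG) all operate on the same resolved vocabulary.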

Option 2: Focus on Digital Twins

This option is designed for operational, physical infrastructure (such as power grids, manufacturing floors, or smart buildings), where the primary data sources are sensor streams, system configurations, and physical assets.

- Phase 1: Constructing the digital twin

  - Step 1: Industry ontologies: Instead of starting with a text-based taxonomy, you begin by modeling the physical infrastructure using established industry-standard ontologies, such as IEC CIM for electricity grids or Brick Schema for buildings.

  - Step 2: Asset and relationship modeling: Define precise rules, thresholds, and connections between physical devices and operational systems.

  - Step 3: Linked enterprise data: Unify fragmented Configuration Management Databases (CMDBs) into a dynamic, real-time knowledge graph digital twin.

- Phase 2: Activating agentic intelligence

  - Step 4: GraphRAG implementation: Enable natural language queries, allowing users to get explainable, deterministic answers from the complex system using simple conversational language.

  - Step 5: Context graph layering: Add dynamic event traces and decision flows directly to your static data map to capture the real-time state of the infrastructure.

  - Step 6: Multi-agentic orchestration: Deploy autonomous AI agents to execute predictive maintenance and manage complex operational workflows with mission-critical trust.

Governing the Semantic Backbone: “Governance-as-code”

Regardless of the chosen option, strict governance is critical to ensuring the Semantic Backbone remains a trustworthy enterprise asset. Graphwise bakes governance directly into the graph layer.

By utilizing open W3C standards like SHACL, the Semantic Backbone automatically enforces data consistency, validates structural quality, and ensures that incomplete or logically flawed data never enters your AI workflow. This explicitly defined, machine-readable business logic acts as a strict guardrail, keeping multi-agentic systems 100% auditable and safe for production.

The case for a Semantic Backbone

Technical arguments

- Eliminates hallucinations: By grounding AI in explicitly defined, rule-based facts rather than probabilistic vector guessing, it delivers deterministic, 100% correct multi-hop inference.

- Schema evolution vs. prompt drift: In prompt-engineered systems, a minor change in business logic requires updating every prompt in the system. With a Semantic Backbone, you update the ontology once, and all connected agents immediately inherit the new logic without retraining or prompt rewriting.

- Semantic consistency in multi-agent systems: When multiple specialized agents collaborate, they need a “shared interlingua.” The backbone provides a machine-readable vocabulary (taxonomy) that ensures an “Order” in the Sales Agent is identical to the “Order” in the Fulfillment Agent.

- Accurate aggregations: LLMs are notoriously bad at counting or calculating. The Semantic Backbone translates natural language questions into precise database queries (SPARQL), returning mathematically accurate aggregations.

- Drastic token reduction: By shifting the reasoning workload into the graph itself, organizations can reduce LLM token consumption by up to 80%, alleviating massive computational costs.

- No vendor lock-in: Because it is built on open W3C Semantic Web standards (RDF, SKOS, OWL), enterprises retain true ownership and portability of their proprietary data models.
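The “accurate aggregations” point above comes down to letting the data layer count instead of the language model. The sketch below is a plain-Python stand-in for what a SPARQL `COUNT` with `GROUP BY` would return; the triples, the `placedBy` predicate, and the `count_by_object` helper are invented for illustration.

```python
from collections import Counter

# Hypothetical order data as (subject, predicate, object) triples.
triples = [
    ("order:1", "placedBy", "customer:42"),
    ("order:2", "placedBy", "customer:42"),
    ("order:3", "placedBy", "customer:7"),
    ("order:1", "status", "shipped"),
]


def count_by_object(triples, predicate):
    """Count triples per object for one predicate, like COUNT ... GROUP BY."""
    return Counter(o for _, p, o in triples if p == predicate)


orders_per_customer = count_by_object(triples, "placedBy")
print(orders_per_customer.most_common())
```

In this arrangement the LLM's job is limited to translating the question into a query and phrasing the answer; the number itself is computed, not generated.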

Business impact

- Radical cost reduction: A Semantic Backbone standardizes data into a machine-interpretable knowledge graph. This “Graph-ification” happens once, allowing dozens of different agents (Legal, Sales, R&D) to plug into the same source of truth. This reduces the marginal cost of deploying subsequent AI agents by up to 70% and eliminates redundant data engineering cycles.

- Accelerated time-to-value: Low-code GraphRAG workflows allow enterprises to move from prototypes to production-grade, highly accurate AI systems in weeks, not months.

- Audit-ready traceability: Every AI response is fully transparent and explainable, providing a clear audit trail back to the exact source documents — a mandatory requirement for highly regulated industries. This avoids multi-million dollar non-compliance fines and ensures the AI strategy is “compliant by design.”

- Active knowledge reuse and ROI: Breaking down data silos prevents redundant R&D and operational overlap. Knowledge Graphs are inherently designed to connect silos. A Semantic Backbone creates a “Digital Twin” of the organization’s logic, allowing agents to answer complex questions that span the entire value chain.

- Decoupling Knowledge from Models (Future-Proofing): When business logic is embedded in prompts or model-specific tuning, you are “locked in.” A Semantic Backbone keeps the Intelligence (the data and its relationships) independent of the Processor (the LLM). This lowers switching costs and ensures the organization can always adopt the most cost-effective or highest-performing LLM without rebuilding its knowledge base.

Top use cases powered by a Semantic Backbone

When you layer GraphRAG and Agentic AI on top of a Semantic Backbone, you unlock transformative enterprise use cases:

Semantic Digital Twins

Traditional digital twins mirror physical systems (like power grids, data centers, railway networks, or smart buildings) but lack inherent intelligence. A semantic digital twin uses the backbone to map dynamic dependencies and thresholds. If a sensor detects an anomaly, the semantic twin can trace the exact relationship between the failing component and the broader ecosystem, allowing for near-instant impact analysis and predictive maintenance.
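Impact analysis of this kind is, at its core, a graph traversal. The following sketch assumes a hypothetical `feeds` relation between grid components and uses a breadth-first walk to list everything downstream of a failing component, together with the path that explains why each asset is affected. The component names are made up, and a production twin would run an equivalent query against the knowledge graph.

```python
from collections import deque

# Hypothetical "feeds" edges: component -> components that depend on it.
feeds = {
    "transformer:T1": ["feeder:F1", "feeder:F2"],
    "feeder:F1": ["substation:S1"],
    "feeder:F2": ["substation:S2"],
    "substation:S1": ["hospital:H1"],
    "substation:S2": [],
}


def impacted_by(component, graph):
    """Breadth-first walk returning every downstream asset and the path to it."""
    paths = {}
    queue = deque([(component, [component])])
    while queue:
        node, path = queue.popleft()
        for child in graph.get(node, []):
            if child not in paths:          # first visit is the shortest path
                paths[child] = path + [child]
                queue.append((child, paths[child]))
    return paths


impact = impacted_by("feeder:F1", feeds)
for asset, path in impact.items():
    print(asset, "via", " -> ".join(path))
```

Returning the path alongside each affected asset is what makes the result explainable: an operator can see that the hospital is at risk because of a specific chain of dependencies.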

Compliance Intelligence

In highly regulated sectors, dense legal texts require massive manual effort to interpret and map to internal controls. A Semantic Backbone drives compliance intelligence and transforms complex regulations into an intelligent map, allowing GenAI to automatically identify compliance gaps and provide instant, hallucination-free answers to regulatory questions. Because the graph retains data lineage, it generates live, transparent audit trails for regulators.

Scientific Knowledge Management

Modern R&D and engineering generate massive volumes of fragmented data across lab systems, codebases, and patents. A Semantic Backbone supports scientific knowledge management by breaking down these silos and making data “FAIR” (Findable, Accessible, Interoperable, Reusable). It enables scientists to use multi-hop reasoning to discover hidden patterns (for example, linking a symptom to a condition to a treatment) and allows field engineers to instantly surface conceptually similar past troubleshooting tickets, dramatically accelerating innovation and repair times.

Technical Knowledge Management

Enterprise expertise is currently fragmented across specialized tools ((C)CMSs, CRMs, wikis, code repositories, ticketing systems, and so on). This results in a distributed landscape where valuable data is trapped in silos, and teams are left without a single, unified view of their own intelligence. To combat digital sprawl, organizations need technical knowledge management based on a Semantic Backbone to unify data and protect intellectual capital without disrupting workflows.